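Data Corruption and Loss¶

Main Factors of Digital Data Loss¶

Digital data can be lost when the only copy on a storage medium is stolen or destroyed in a fire, just as traditional paper copies or negatives can be. Beyond that, this section discusses other sources of digital data loss, which fall roughly into the following categories:

Physical deterioration of storage media (all media deteriorate, at different rates).

Undetected errors during data transfer.

Lack of long-term support for some proprietary digital formats.

Obsolete hardware.

Kroll Ontrack, the world's largest data recovery firm, has published some statistics on what actually causes data loss: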

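| Cause of data loss | Perception | Reality |
|---|---|---|
| Hardware or system problem | 78% | 56% |
| Human error | 11% | 26% |
| Software corruption or problem | 7% | 9% |
| Computer viruses | 2% | 4% |
| Disaster | 1-2% | 1-2% |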

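Let us analyze these cases step by step.

Storage Deterioration¶

The devices listed below are sorted by data access speed, from slowest to fastest.

Magnetic Tape¶

Magnetic tapes are commonly used in professional backup systems but rarely in home systems. Tapes have issues with long-term data retention, can be damaged by strong magnetic fields, and tape technology keeps changing. In some respects, however, tapes are safer than optical discs: they are less exposed to scratches, dirt, and write errors. To avoid long-term retention problems, tapes should be re-copied every 5-8 years; otherwise too many bits will fail for the checksum protection to correct. The downsides are that tape drives are expensive and that restoring data can take up to 20 times longer than from a hard drive. Tape backup systems are best suited to large professional environments that need to back up large amounts of data.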

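Optical Drives¶

You may be surprised to learn that many CD-Rs physically deteriorate faster than film. Film may well outlast some optical media by decades, but digital media that is backed up regularly never loses anything. Film starts to decay from the moment it is created and developed; digital ones and zeros do not. Film never retains the colors and contrast it had when it was first created, whereas digital data does. Digital media, however, is susceptible to corruption.

All optical media are prone to errors, even when freshly written. That is why they are heavily protected by error-correcting codes that take up 25% of the disc's effective storage space. Even with such strong protection, they still deteriorate through chemical aging, ultraviolet exposure, scratches, dust, and so on.

An ordinary CD or DVD, even when properly looked after, should not be trusted for more than a few years. You can buy archival-quality CDs and DVDs, which last longer but are harder to obtain and more expensive. There are gold-plated optical media on the market, costing a few euros each, that claim a storage life of 100 years (if you care to believe it).

Eventually all optical discs become unreadable, but you can reduce the risk by using good discs, a good burner, and proper storage. The best burners cost little more than the cheapest ones, yet they write much more reliably, so choosing the right burner matters.

For damaged optical media, Wikipedia has a list of common data recovery applications (https://en.wikipedia.org/wiki/Data_recovery#List_of_data_recovery_software) designed to retrieve data from damaged floppy disks, hard drives, flash media such as camera memory cards and USB drives, and similar devices.

A dual-layer Blu-ray disc can store 50 GB, almost six times the 8.5 GB capacity of a dual-layer DVD. Everything said about CDs and DVDs applies to Blu-ray discs as well.

Best practice: burn discs slowly, with a good burner, on archival-quality media, in an open, non-proprietary format. Read the data back to verify it. Label the discs with descriptive text, the date, and the author. Store them in a clean, dark, dry place that animals cannot reach. And do not forget to copy them to the next generation of media before you throw away the last piece of hardware or software able to read them.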

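Hard Disks¶

Hard disk drive (HDD) manufacturers keep their statistics to themselves. A manufacturer's warranty buys you a new disk, but it says nothing about how long the disk will last. Backblaze, a storage provider, reported an annualized failure rate of 1.5% in 2023, based on an inventory of 237,278 hard drives. Google has carried out a large-scale study of HDD failure mechanisms (the Disk Failures study).

In a nutshell: disks run longest when operating between 35°C and 45°C. It may seem counter-intuitive, but HDD failure rates increase dramatically at lower temperatures. Controller parts (electronics) are the foremost source of failure, an error source that SMART does not diagnose. Some SMART errors are indicative of imminent failure, in particular scan errors and reallocation counts. At this time, life expectancy is 4-5 years.

In general, and contrary to intuition or ecological considerations, running a hard drive continuously gives it a longer life than switching it on and off frequently. There are even reports that aggressive power management, which spins the drive down quickly, shortens its life. The worst factors for a hard drive are therefore probably vibration, shocks, and low temperatures.

If your disk starts making strange noises, ordinary file recovery software will not help. Make a quick backup immediately. (If possible, use the dd utility rather than a normal file backup, because dd reads the disk from beginning to end in one smooth, sequential stream and does not stress the mechanics.) There are specialist companies that can recover data from failed drives, but the process is very expensive.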
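To make the idea of a sequential, start-to-finish read concrete, here is a minimal Python sketch of what a tool like dd does when imaging a disk. It is an illustration only, not a replacement for dd: the function name `image_device` and the 1 MiB block size are assumptions made for this example, and zero-filling unreadable blocks is roughly the behavior of `dd conv=noerror,sync`.

```python
import os


def image_device(source_path, image_path, block_size=1024 * 1024):
    """Copy source to image in one sequential pass.

    Blocks that cannot be read are replaced by zeros so that all later
    data stays at the correct offset in the image.
    Returns (bytes_written, unreadable_block_count).
    """
    bad_blocks = 0
    offset = 0
    # buffering=0 gives raw, unbuffered reads from the source.
    with open(source_path, "rb", buffering=0) as src, open(image_path, "wb") as dst:
        size = src.seek(0, os.SEEK_END)
        while offset < size:
            length = min(block_size, size - offset)
            src.seek(offset)
            try:
                chunk = src.read(length)
            except OSError:
                chunk = b""
            if not chunk:
                # Unreadable block: keep offsets aligned by writing zeros.
                chunk = b"\x00" * length
                bad_blocks += 1
            dst.write(chunk)
            offset += len(chunk)
    return offset, bad_blocks
```

On Linux the source would typically be a device node such as /dev/sdb (a hypothetical name here), read with root privileges, and the image should be written to a different, healthy drive.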

The SmartMonTools suite for Linux lets you query storage devices for signs of impending failure. We strongly recommend using this kind of tool on your computer.

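Solid-State Drives¶

SSDs are mechanically more robust than HDDs, and faster. As their capacity and price become more competitive, SSDs are replacing HDDs and becoming an increasingly good solution for permanent data storage.

Backblaze reported an annualized failure rate of 1% in 2023, based on an inventory of 3,144 SSDs. SSDs are therefore better than HDDs, but still not 100% reliable.

When an SSD is used as an external device, a major cause of data loss (often recoverable) is removing the SSD from the computer without ejecting it safely. Before data is saved from computer memory to an attached device, it sits in buffers for a while. For hard drives this means a few seconds at most, whereas for SSDs it can be tens of minutes. So before disconnecting a flash device, always make sure the data buffers have been flushed by using your operating system's Safely Remove Device feature.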
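The buffering described above can also be addressed from within a program. The following is a small sketch, assuming a hypothetical helper named `write_durably`, of how an application can ask the operating system to push a file out of its caches. It does not replace safe removal, which also flushes file system metadata and the data of other programs.

```python
import os


def write_durably(path, data):
    """Write bytes to path and ask the OS to push them to the device."""
    with open(path, "wb") as f:
        f.write(data)
        f.flush()             # Python's own buffer -> operating system
        os.fsync(f.fileno())  # operating system cache -> storage device
    return os.path.getsize(path)
```

The two-step flush is deliberate: `flush()` only hands the data to the operating system, while `os.fsync()` asks the operating system to write it to the device.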

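Non-Volatile Memory Express (NVMe)¶

NVM Express (NVMe) is a logical device interface for accessing non-volatile storage media attached to a computer through the PCI Express (PCIe) bus. NVMe drives use the same ultra-fast NAND flash memory as other SSDs, but are typically packaged as M.2 cards and connect over PCIe instead of the slower SATA interface (including its mSATA form factor) originally designed for hard drives.

NVMe lets host hardware and software take full advantage of the parallelism available in modern SSDs. It reduces I/O overhead and brings various performance improvements over earlier SSD interfaces. The SATA interface protocol was developed for much slower hard drives, where there is a very long delay between a request and the data transfer and where data rates are far below RAM speeds.

Because NVMe devices store data on the same kind of hardware as other SSDs, their reliability should be similar.

Important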

In all cases, SSDs or NVMe as internal devices are the more modern and efficient solution to host the digiKam databases and your image collections.

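Power Failures¶

Power Surges¶

About 1% of all computers are affected by lightning and power surges every year.

This section is about total data loss caused by power surges. Of course, data is occasionally lost when power fails while a file is being saved, but such losses can usually be recovered without much trouble.

Do not wait for the next thunderstorm to start worrying about what a sudden power fluctuation can do to your computer system. Recent statistics show that 63% of electronics casualties are due to power problems, and that most computers are subject to two or more power anomalies a day. Since a surge or blackout can happen anywhere and at any time, it makes sense to invest in some form of surge protection for your computer.

How Surges Happen¶

A power surge occurs when the line voltage rises above its nominal value for more than 10 milliseconds. 60% of surges originate inside the home or office, typically when an appliance (such as a washing machine, refrigerator, or water pump) shuts off and the power it was drawing is diverted elsewhere as excess voltage. The remaining 40% are caused by lightning, grid switching, line shorts, poor wiring, and similar factors.

While most electrical appliances are unaffected by surges, devices that rely on computer chips and high-speed microprocessors are susceptible to serious damage. A power anomaly reaching your computer can lock up the keyboard, wipe out data completely, degrade the hardware, damage the motherboard, and more. Failing to protect yourself can cost you both time and money.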

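Surge Protectors¶

The most common defense against surges is a surge protector or suppressor, a device that absorbs part of the excess energy and diverts the rest to ground. These devices usually come in the form of a power strip (a long device with six or so outlets and a single grounded plug). Bear in mind, however, that not every power strip offers surge protection.

When choosing a surge protector, make sure it conforms to the UL 1449 standard, which sets a minimum level of protection. You should also look for a product that protects against lightning (not all do) and that provides insurance for equipment that was properly connected.

Because a surge can reach your computer by any path, make sure every peripheral connected to the system is protected, including the phone line or cable modem. Some manufacturers now make surge suppressors with a phone jack alongside the power outlets, while others provide coaxial jacks for users of a cable modem or TV tuner card.

If you use a laptop, it is a good idea to carry a surge suppressor with you. A variety of small suppressors designed for laptops are available, with both power and phone jacks, which makes them well suited to travel.

Uninterruptible Power Supply (UPS)¶

While a surge suppressor protects your system from minor fluctuations on the power line, it is of no help when the power goes out. Even an outage of a few seconds can cost you valuable data, so investing in an uninterruptible power supply (UPS) is worthwhile.

Besides acting as a surge suppressor, a UPS switches automatically to battery power when the mains fail, giving you the chance to save your data and shut the system down. Some models even let you keep working until power is restored. When buying a UPS, make sure it has the qualities you would expect of a surge suppressor, and check the battery life and the bundled software.

Given the potential risk to your computer system, protecting it from power disturbances is a worthwhile investment. A good surge suppressor or a 500 W UPS is not expensive and buys you peace of mind. At the very least, consider unplugging every cable from your computer when you go on holiday.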

保护策略¶

网络存储服务¶

亚马逊网络服务(AWS)包括 S3(简单存储服务)。通过适当配置,你可以在 Linux、Mac 和 Windows 系统上将 S3 挂载为驱动器,将其用作备份目的地。Google Drive 是另一种流行的云存储服务,可存储无限量的数据。

与家用硬盘相比,云存储成本较高,且需要通过相对较慢的互联网传输图像。但我们认为,云存储可以有效防止最重要图像的本地数据丢失。

Google 相册和 Flickr 提供专门用于照片的在线存储服务。免费空间有限,因此不建议存储全分辨率图像,但付费账户提供更多存储空间。

就数据保留而言,基于网络的解决方案可能非常安全。传输错误会自动纠正(得益于TCP协议),且大公司通常具备备份和分布式存储,使其自身具备抗灾能力。

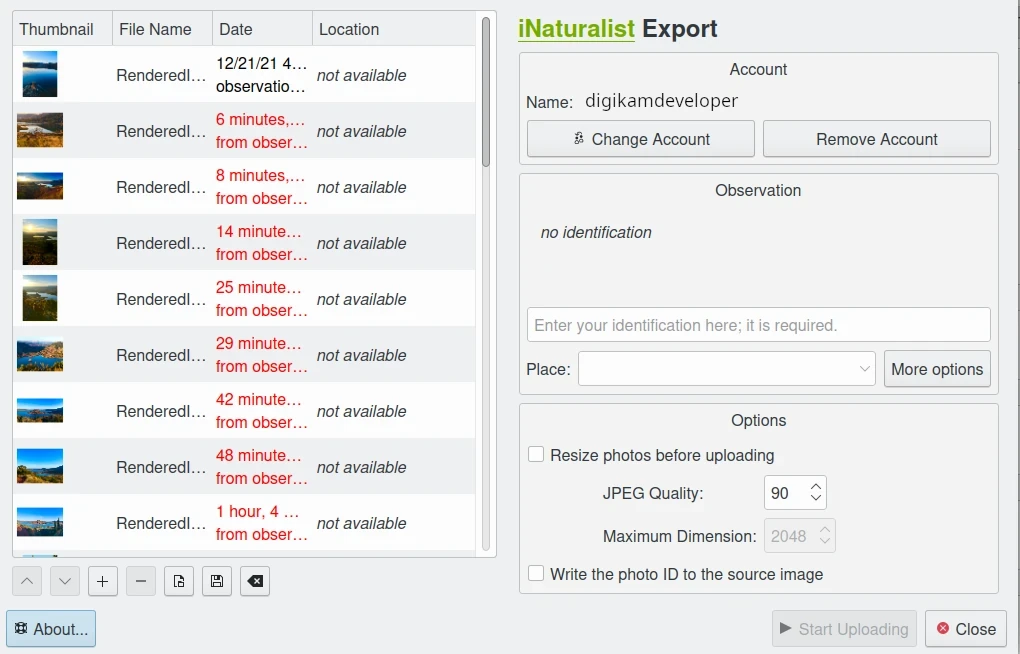

digiKam 提供导出工具至 iNaturalist 网络服务¶

传输错误¶

数据丢失不仅发生在存储设备上,数据在计算机内部或跨网络传输时也可能丢失(尽管网络流量本身通过 TCP 协议具有错误保护功能)。计算机内部总线和内存芯片偶尔会发生错误。消费级硬件没有针对随机比特错误的保护措施,但监控和纠正错误的技术是存在的。你可以购买支持 ECC(纠错码)的内存,搭配支持 ECC 的主板使用,尽管价格较高。 ECC RAM 至少可以监控并纠正单比特错误,双比特错误可能无法检测,但其发生频率极低,无需担心。

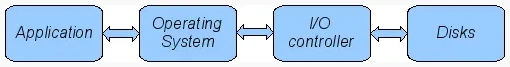

应用程序与存储介质之间的数据传输流程¶

下图展示了计算机中的传输链元素,所有传输环节都可能发生错误。Linux 的 ZFS 和 BTRFS 文件系统旨在确保从操作系统到磁盘路径的完整性。

内存和传输通道的比特错误率(BER)约为1万亿分之一(每比特 1E-12)。这意味着每 3000 张30MB 的图像中就有 1 张因传输问题出错。错误对图像的影响是随机的:可能导致图像损坏,或某个像素值改变。但由于几乎所有图像都使用压缩技术,单个比特错误的影响无法预测。
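The order of magnitude of that estimate can be checked with a few lines of arithmetic. The sketch below assumes decimal megabytes (1 MB = 1,000,000 bytes) and independent bit errors; the function names are made up for this example.

```python
def expected_bit_errors(size_megabytes, bit_error_rate=1e-12, transfers=1):
    """Expected number of flipped bits when a file crosses the chain."""
    bits = size_megabytes * 1_000_000 * 8
    return bits * bit_error_rate * transfers


def images_per_error(size_megabytes, bit_error_rate=1e-12, transfers=1):
    """Average number of images transferred per single bit error."""
    return 1 / expected_bit_errors(size_megabytes, bit_error_rate, transfers)
```

For a 30 MB image and a single transfer this gives about 0.00024 expected errors, or one affected image in roughly 4,000. That is the same order of magnitude as the figure quoted in the text; the exact number depends on how megabytes are counted and on how many times each file travels through the chain.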

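Worst of all, hardware usually gives no warning when a transmission or memory error occurs. These faults go unnoticed until the day you open a photograph and find it corrupted. It is worrying that there is almost no protection against transmission errors inside a computer. Ironically, the Internet (with the TCP protocol) is a safer data path than the inside of a computer.

Unreliable power supplies are another source of transmission losses, because they disturb the data streams. With many ordinary file systems, such errors can go unnoticed.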

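The Future of File Systems¶

ZFS from Oracle appears to be one of two candidates capable of dealing with disk errors at a low level, and it is highly scalable. It is open source, but heavily patented and released under a GPL-incompatible license. It is available on Linux and macOS.

Oracle also started the BTRFS file system, which uses the same protection techniques as ZFS and is available on Linux.

Human Errors¶

Theft and Accidents¶

Do not underestimate the likelihood of losing data to theft or accident. Together these two factors account for 86% of data loss on notebooks and 46% on desktop systems. Theft alone accounts for 50% of notebook data loss.

Malware¶

Data loss caused by viruses is less severe than is commonly believed. Viruses do less damage than theft or system re-installation. Although malware used to target mainly Microsoft operating systems, attacks on Linux and Apple systems are becoming more frequent.

People and Data Loss¶

Human error is one of the main causes of data loss. People really do make silly mistakes, such as pulling the wrong disk out of a RAID array or formatting a hard drive and wiping everything on it. Acting without thinking is dangerous for your data.

When something goes wrong, take a deep breath and stay calm. The best approach is to make a plan before you commit the mistake that could cause a major loss, and to explain that plan to a layperson. You will be surprised how many silly mistakes you avoid simply by making a plan and explaining it to someone else.

If your disk starts making strange noises, ordinary file recovery software will not help. Make a quick backup immediately. If the disk is still spinning but you cannot find your data, try a data recovery tool and back the data up to another computer or drive. The open-source CloneZilla suite is a universal and powerful solution. The key is to get the data onto another drive (another computer, a USB stick, or a hard disk) and to save the recovered data to a different disk. On Linux systems, the dd utility is your friend.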

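Common Myths Dispelled¶

We would like to dispel some common myths:

Open-source file systems are less prone to data loss than proprietary ones: wrong. NTFS is, if anything, a tiny notch better than ext4, ReiserFS, JFS, or XFS, to name only the most popular file systems.

Journaling file systems prevent data corruption or loss: wrong. They only speed up the scan after an interrupted operation and prevent ambiguous states. If a file was not fully saved before the interruption, it is still lost.

RAID systems prevent data corruption or loss: mostly wrong. RAID 0 has no redundancy and actually makes data loss more likely. RAID 1 protects against a read failure on a single disk through mirroring, but not against other failures. RAID 5 protects against data loss from a disk failure, but not against file system or RAID controller errors. Many low-end RAID controllers (most motherboard controllers, for instance) do not report problems at all, on the assumption that you would never notice. And if you do notice a problem months later, what are the chances that you will trace it back to the controller? One particularly insidious problem is corruption of RAID 5 parity data. Checking an ordinary file is simple: read it and compare it against the metadata. Checking parity data is much harder, so parity errors typically come to light only when the array is rebuilt. By then, needless to say, it is too late.

Viruses are the biggest threat to digital data: wrong. Theft and human error are the primary causes of data loss.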

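Storage Capacity Estimation¶

Digital camera sensors are within 1-2 f-stops of their fundamental physical limits. In other words, technological progress has a ceiling, and the sensitivity and noise performance of any kind of sensor are now close to it.

Mainstream cameras today routinely advertise 50 megapixels, but the actual image does not necessarily deliver that resolution. Given sensor size and lens quality, 12 megapixels is enough for a compact camera, and DSLRs top out at around 20-24 megapixels. Higher resolutions call for full-frame (24x36 mm) or even larger sensors.

So, manufacturers' megapixel claims notwithstanding, it seems safe to predict that most future cameras will stay below 30 megapixels. That lets us estimate the storage needed per photograph: no more than 40 MB. Even if file versioning is introduced (grouping the variations of a photograph under one file reference), the trend is to record only the editing instructions rather than a complete copy, so each version requires only a small amount of data.

Estimating how much storage you need is simple: use the digiKam Timeline sidebar to find out how many photographs you take per year, then multiply by 40 MB. Most people keep fewer than 2,000 photographs a year, which requires less than 80 GB per year. Assuming you replace your hard disk (or whatever the future storage medium is) every 4-5 years, the natural growth in storage capacity will easily keep pace with your needs.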
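The estimate above is a single multiplication. As a small sketch, with function names invented for this example and decimal units (1 GB = 1,000 MB) assumed:

```python
def yearly_storage_gb(photos_per_year, mb_per_photo=40):
    """Storage needed for one year of photographs, in gigabytes."""
    return photos_per_year * mb_per_photo / 1000


def storage_until_replacement_gb(photos_per_year, years=5, mb_per_photo=40):
    """Storage needed until the disk is replaced, in gigabytes."""
    return yearly_storage_gb(photos_per_year, mb_per_photo) * years
```

With 2,000 photographs a year, `yearly_storage_gb(2000)` gives 80.0, and keeping everything for a five-year disk lifetime gives 400.0 GB, well within the capacity of an inexpensive drive.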

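Photo enthusiasts who need more space, possibly much more, should go straight to a file server. Motherboards now come with Gigabit Ethernet built in, so transferring files over the local network is fast. If you do not need that much data, consider a recent motherboard that supports fast SSDs. A few terabytes of fast SSD attached through a Thunderbolt 5 port will make your photo library fly.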

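Back Up and Recover Data¶

6% of all computers suffer data loss in any given year. You have been warned, so do not blame anyone else when it happens. Multi-terabyte hard drives and SSDs are inexpensive these days: buy one and back up regularly. In particular, before risky operations such as re-installing the system, replacing a disk, or resizing partitions, always make a backup and test that it works.

Disaster Prevention¶

Say you diligently back up to an external SATA drive every day. Even if lightning strikes one day, your data is safe, unless, like most people, you leave the external drive connected to the computer all the time.

Local disasters tend to cause collateral damage. Never mind plane crashes: fire, water, power surges, mischievous children, and thieves are quite enough. When something happens at home, it usually affects a whole room or the whole house.

The key to real disaster control is therefore to store backups in separate places. Rotate your backup drives regularly, and keep the backups upstairs, at your parents' house, or at the office.

Keeping backups apart has another advantage: people in a panic tend to delete data by mistake, sometimes including the backup. With the backup somewhere else, you have time to calm down before you do anything rash.

Backup Types Explained¶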

Full Backup: A complete backup of all the files being backed up. It is a snapshot without history, representing a full copy of your data at one point in time.

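Differential Backup: A backup of only the files that have changed since the last full backup. It represents the complete difference between two points in time.

Incremental Backup: A backup of only the files that have changed since the last backup of any kind, forming a chain of snapshots. You can restore the state at any backup point in time, much like version control, though not continuously.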
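The three backup types differ only in the reference point they compare against. The sketch below illustrates this by selecting files on their modification time; it is a simplification (real backup tools also track deletions, renames, and metadata), and the function names and the timestamp-based approach are assumptions made for this example.

```python
import os


def files_changed_since(root, reference_time):
    """Return paths under root modified after reference_time (epoch seconds)."""
    changed = []
    for folder, _dirs, names in os.walk(root):
        for name in names:
            path = os.path.join(folder, name)
            if os.path.getmtime(path) > reference_time:
                changed.append(path)
    return sorted(changed)


def select_for_backup(root, kind, last_full_time, last_backup_time):
    """Pick the files a full, differential, or incremental backup would copy."""
    if kind == "full":
        reference = float("-inf")     # everything, regardless of age
    elif kind == "differential":
        reference = last_full_time    # changes since the last full backup
    elif kind == "incremental":
        reference = last_backup_time  # changes since the last backup of any kind
    else:
        raise ValueError(f"unknown backup kind: {kind}")
    return files_changed_since(root, reference)
```

Because an incremental backup only looks back to the previous backup, restoring requires the last full backup plus every increment since; a differential backup needs only the last full backup and the latest differential.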

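Backing Up Your Data¶

A sound backup plan looks like this:

First make a full backup to external storage.

Verify its data integrity and put it away (in a separate location).

Keep a second storage device for frequent routine backups.

Swap the two devices every other month, verifying the data first.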
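One way to carry out the verification step is to compare checksums of the originals with checksums of the backup. The following is a minimal sketch, assuming SHA-256 and hypothetical function names; it reports files that are missing from the backup or whose contents differ.

```python
import hashlib
import os


def sha256_of(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path relative to root to its checksum."""
    manifest = {}
    for folder, _dirs, names in os.walk(root):
        for name in names:
            path = os.path.join(folder, name)
            manifest[os.path.relpath(path, root)] = sha256_of(path)
    return manifest


def verify_backup(source_root, backup_root):
    """Return relative paths that are missing from or differ in the backup."""
    source = build_manifest(source_root)
    backup = build_manifest(backup_root)
    return sorted(p for p, h in source.items() if backup.get(p) != h)
```

An empty result means every source file has an identical copy in the backup. Storing the manifest alongside the backup would also make it possible to re-check the backup later without access to the originals.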

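Recommended Tools¶

The rsync utility on Linux is excellent: it transfers only the parts that have changed, supports compression, and can run over ssh for encryption. It is far less trouble than writing FTP scripts.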
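As an illustration of the three features just mentioned, the sketch below assembles an rsync command line from Python. The options used are standard rsync options (`-a` archive mode, `-z` compression, `-e ssh` to run over ssh, `--dry-run` to preview); the helper names, the paths, and the host name in the usage note are hypothetical.

```python
import subprocess


def rsync_command(source, destination, dry_run=True):
    """Build an rsync command that mirrors source to destination over ssh."""
    command = ["rsync", "-a", "-z", "-e", "ssh"]
    if dry_run:
        # Only report what would be transferred; change nothing.
        command.append("--dry-run")
    command += [source, destination]
    return command


def run_backup(source, destination, dry_run=True):
    """Run rsync and raise CalledProcessError if it reports a failure."""
    return subprocess.run(rsync_command(source, destination, dry_run), check=True)
```

For example, `run_backup("/home/me/Pictures/", "me@backuphost:/srv/backup/Pictures/")` would preview a transfer to a hypothetical host named backuphost. The trailing slash on the source tells rsync to copy the directory's contents rather than the directory itself. Starting with a dry run is a cautious default; pass `dry_run=False` once the preview looks right.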

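A sensible backup scheme for images:

Burn important photographs to disc as soon as they have been imported to the computer.

Make a daily incremental backup of the work space.

Make a weekly differential backup and delete the full backups from two weeks ago.

Make a monthly differential backup and delete the backups from two months ago.

If your backup drives have so far been kept in the same place, separate them now.

This scheme leaves enough time to discover a loss and to recover completely when needed. It also retains daily versions for the last 7-14 days, weekly snapshots for at least a month, and complete monthly snapshots, while keeping the total backup volume below 130% of the work space. Any further trimming of the backups should be done by hand, and only after a complete verification.

You should also think about how to protect your photographs through changes of technology and ownership.

To keep your valuable photographs safe for a generation or two, follow two principles:

Keep up with technology, and do not lag behind by more than two or three years.

Save your photographs in an open, non-proprietary standard format.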

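Keep Up with Technology¶

Although the future is by nature unpredictable, technological progress seems certain to continue. Every 5 to 8 years you should ask yourself whether your current system is still backward compatible. The fewer variants you have used in the past, the fewer questions you will have to answer in the future.

Of course, you have to ask the same question every time you change your computer system (machine, operating system, applications, DRM). Today, if you want to switch to Windows, you have to check carefully whether you can still import your photographs and, more importantly, whether you will ever be able to move them on to another system or machine. If you are tied to a proprietary system, chances are you cannot. We have seen many people struggle because Windows enforces a strict DRM regime. How do you prove to Windows that you actually own the copyright to your photographs?

The solution is to use only open standards that are supported by many applications.

Virtual machines are now commonplace. So if you have an old system that matters for reading your photographs, keep it, so that you can install it as a virtual machine later.

If you would rather not go to that trouble, the advice is simple: every time you change your computer architecture, your storage and backup technology, or your file format, check your photo library and convert it to a newer standard where necessary. And stick to open standards.

Scalability¶

Scalability is the tech geek's term for a system's capacity to be resized, which usually means scaled up.

Suppose you have planned for scalability and stored your photo collection in a container that you now want to grow onto a separate disk or partition. On a Linux system you can copy the container to the new disk and resize it there.

Use Open File Formats¶

The short history of the digital era over the past 20 years has shown again and again that proprietary formats are the wrong choice when you want your data to be readable 10 years from now. Microsoft is the best-known provider of proprietary formats because of its dominant market share. Other vendors may be worse choices still, because they may not stay in the market long enough or have only a small base of users and contributors. With Microsoft there is at least the advantage that many people share the same problems, which makes it more likely that you will find a solution to a problem involving its proprietary formats. Even so, it is still common for a version of the MS Office suite to be unable to read correctly a document created with the suite two major versions earlier.

Fortunately, image formats generally have a longer life cycle than office documents and are less affected by obsolescence.

The great advantage of open standards is that their specification is open. Even if, one day, no software is left that can read a particular file format, someone can recreate such software from the specification alone.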

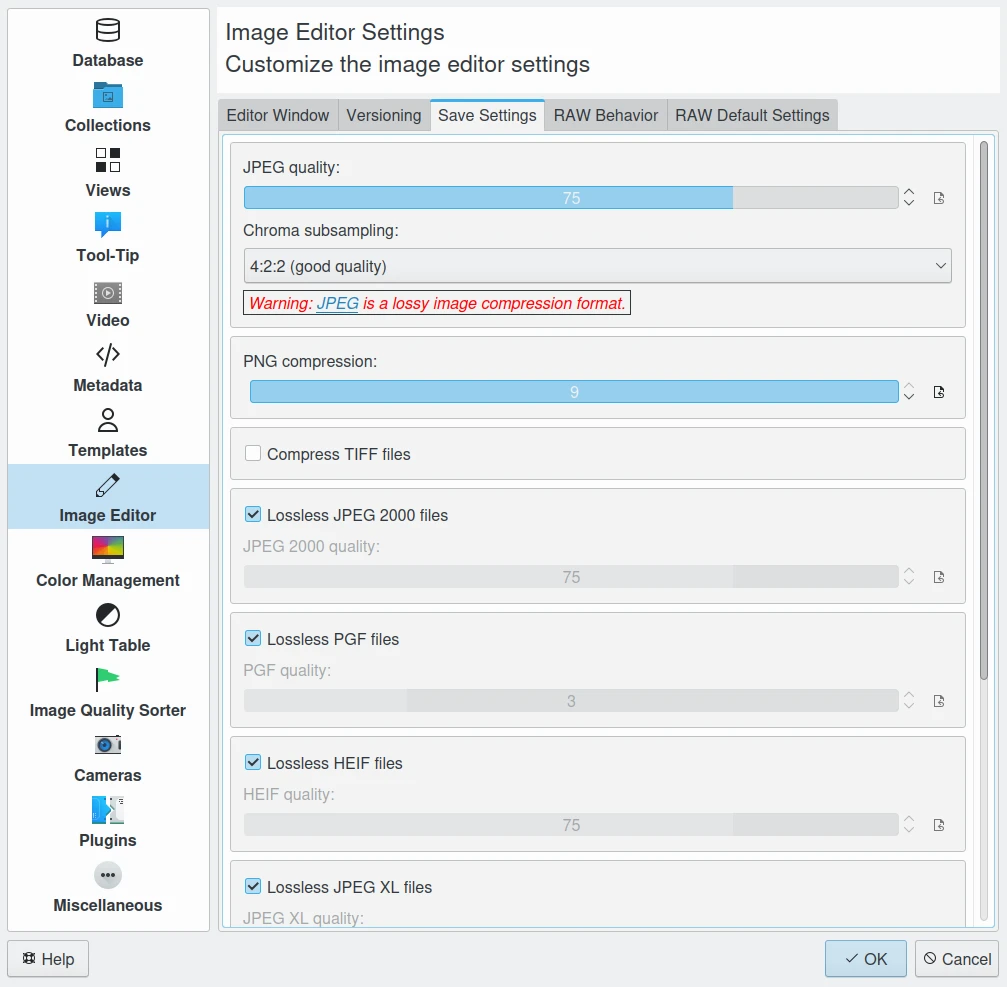

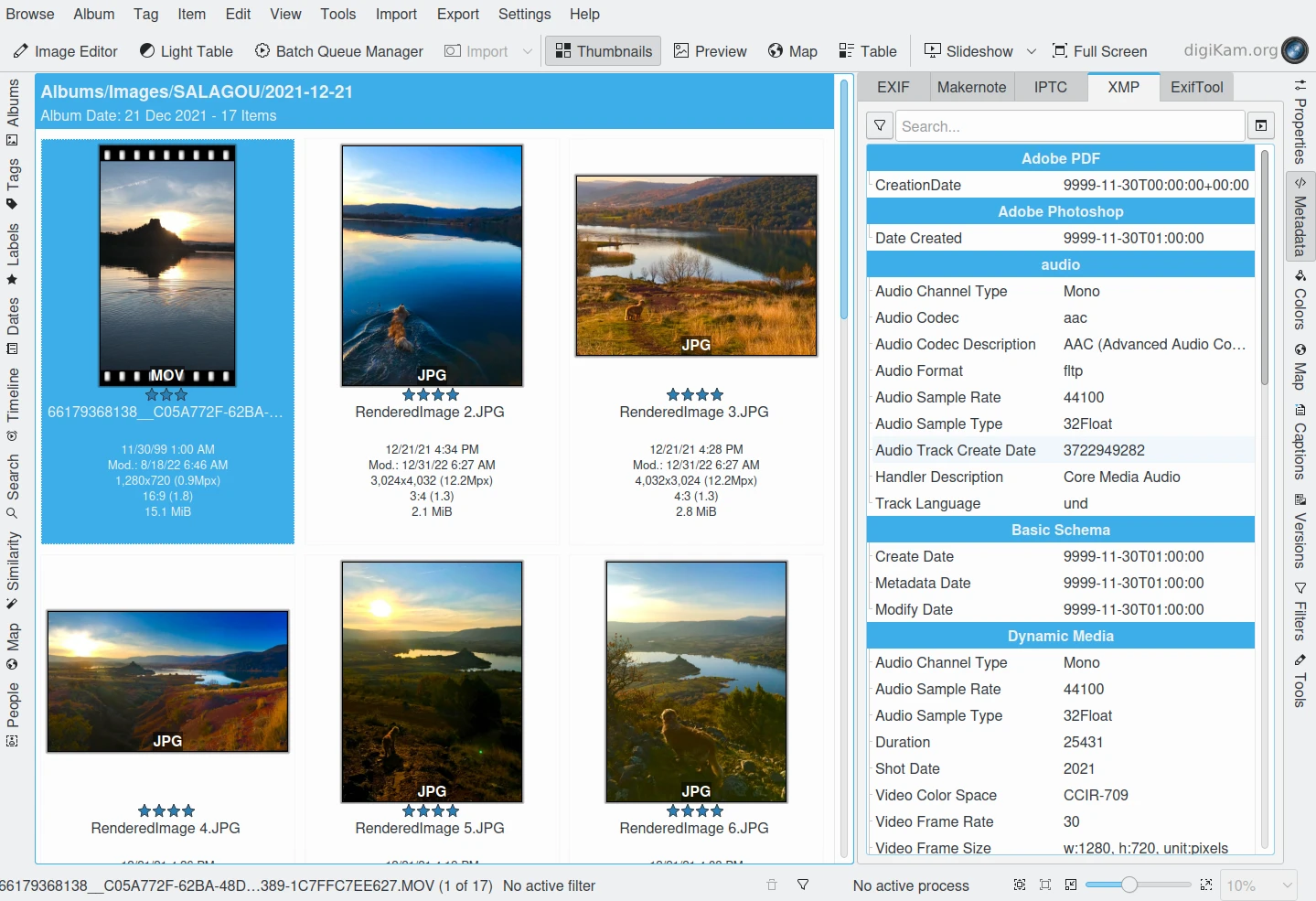

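Default Save Settings for Common Image Formats in the digiKam Image Editor¶

JPEG has been around for a while. It is a lossy format that loses a little information every time you modify the original file and save it. On the plus side, JPEG is ubiquitous, supports JFIF, Exif, IPTC, and XMP metadata, compresses well, and can be read by every piece of imaging software. Because of its metadata limitations, its lossy nature, its lack of transparency, and its 8-bit color channel depth, we do not recommend it. JPEG 2000 is better and can be used losslessly, but it has a small user base.

GIF is a proprietary format that was long encumbered by patents, and it is slowly disappearing from the market. Do not use it.

PNG was invented as an open standard to replace GIF, but it does much more. It is lossless, supports XMP, Exif, and IPTC metadata, and offers 16-bit color encoding and full transparency. PNG can store gamma and chromaticity data to improve color matching across heterogeneous platforms. Its drawbacks are relatively large files (though smaller than TIFF) and slow compression. We recommend it.

TIFF is widely accepted as an image format. A TIFF file can be uncompressed, or it can be a container that uses a lossless compression algorithm (Deflate). It maintains high image quality, at the cost of much larger files. Some cameras let you save photographs in this format. The problem is that the format has been altered by so many people that there are now more than 50 variants, and not every application recognizes all of them.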

PGF for Progressive Graphics File is another not so well known but open file image format. Wavelet-based, it allows lossless and lossy data compression. PGF compares well with JPEG 2000 but it was developed for compression/decompression speed rather than compression ratio. A PGF file looks significantly better than a JPEG file of the same file size, while also remaining very good at progressive display. PGF format is used internally in digiKam to store compressed thumbnails in the database. For more information about the PGF format see the libPGF homepage.

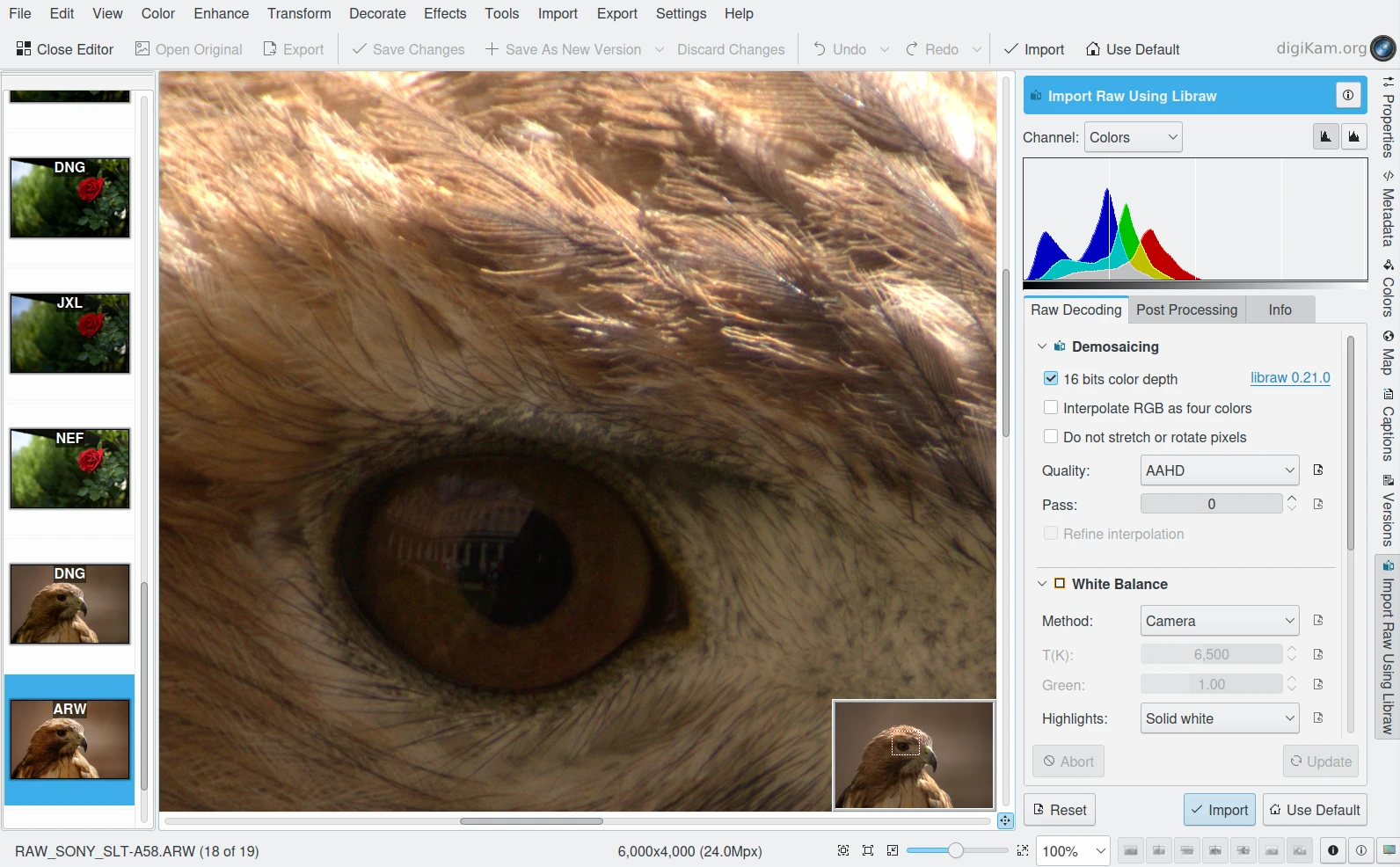

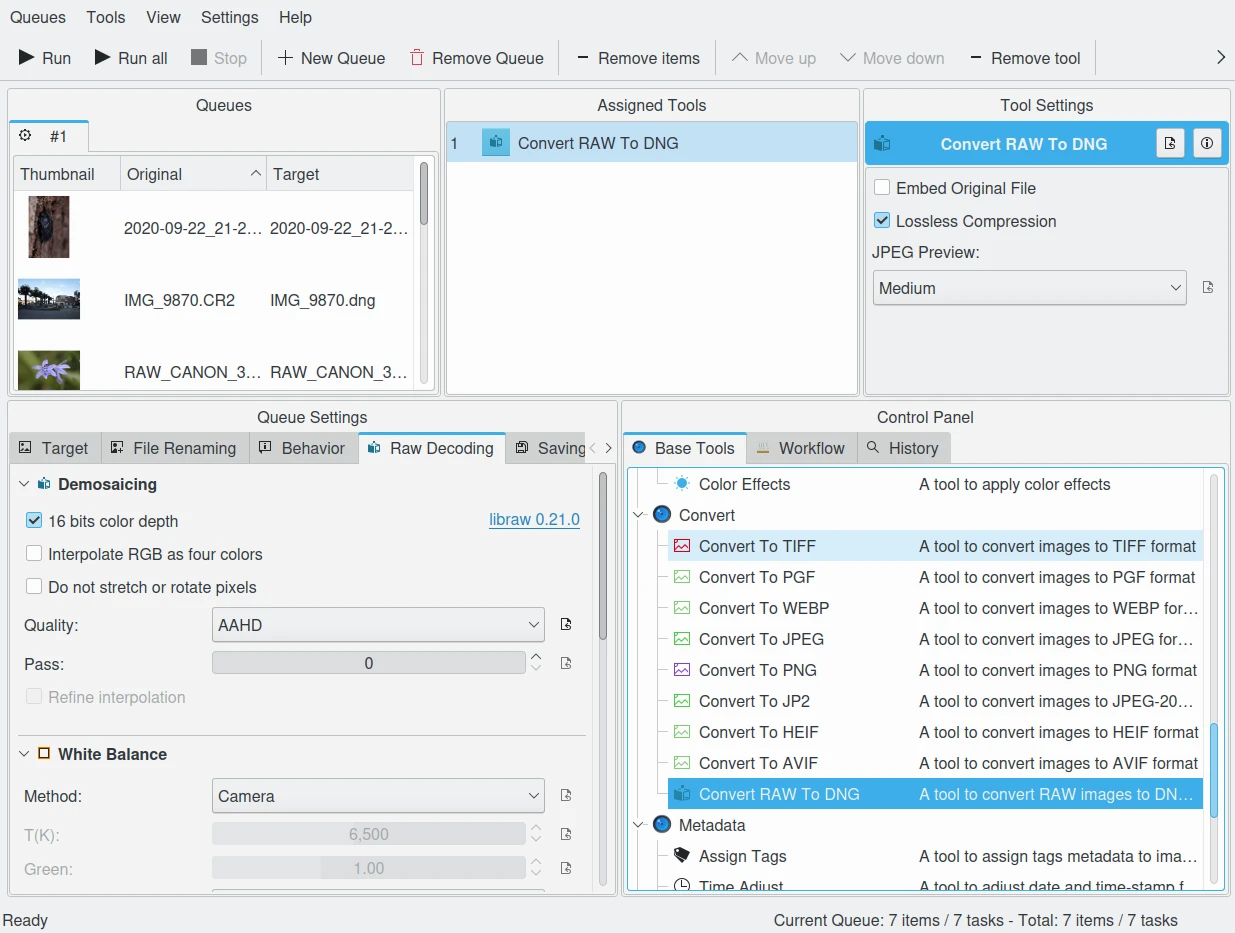

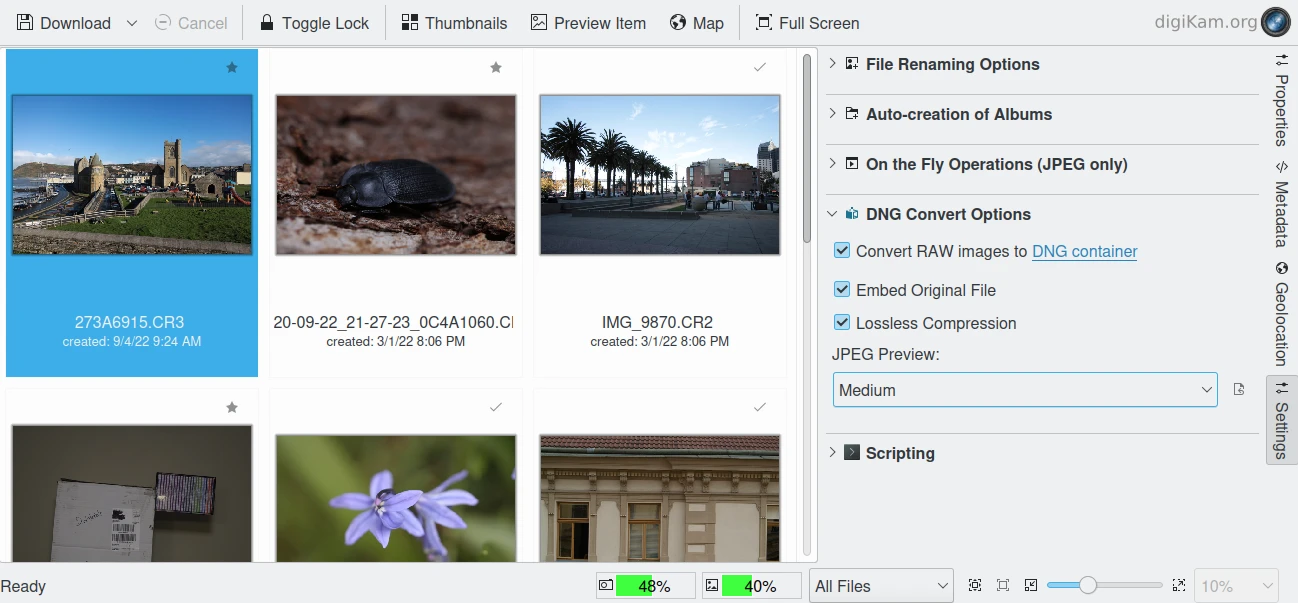

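The RAW Import Tool of the digiKam Image Editor¶

RAW format. Some of the more expensive cameras can shoot in RAW format. RAW is not really an image standard; it is a container format that differs for every brand and camera model. A RAW image contains minimally processed data from the image sensor of a digital camera or image scanner. Raw image files are sometimes called digital negatives because they play the same role as film negatives in traditional chemical photography: the negative cannot be used directly as an image, but it holds all the information needed to create one. Storing photographs in the camera's RAW format gives you a higher dynamic range and lets you change settings, such as white balance, after the shot. Most professional photographers use RAW because it gives them the greatest flexibility. The downside is that RAW image files can be very large.

We recommend that you do not archive in RAW format (archive, not shoot: we do recommend shooting in RAW). Storing images in their native RAW format has no advantage. RAW formats come in many varieties, and all of them are proprietary. In a few years you may well be unable to use your old RAW files. We have seen people change cameras, lose their color profiles, and have great difficulty processing their old RAW files correctly. We suggest that you switch to the DNG format instead. [Translator's note: in our country, keeping important RAW files is still strongly recommended for copyright protection purposes.]

DNG (Digital Negative) is a royalty-free, open RAW image format developed by Adobe. It addresses the lack of a unified camera raw file format, is based on the TIFF/EP format, and mandates the use of metadata. A handful of camera manufacturers have already adopted DNG; let us hope that major players such as Canon and Nikon follow soon. The Apple ProRAW format, available since the iPhone 12 Pro models, is based on DNG.

We strongly recommend converting RAW files to DNG for archiving. Although DNG was created by Adobe, it is an open standard that has been widely embraced by the open-source community (usually a good sign of a format's longevity). Some camera manufacturers have already adopted DNG as their RAW format. Last but not least, Adobe, as a major player in graphics software, can be expected to support its own invention. DNG is an ideal archival format: the raw sensor data is preserved in TIFF form inside the DNG, which mitigates the risk that comes with proprietary RAW formats. All of this makes moving to another operating system painless.

XML for Extensible Mark-up Language or RDF for Resource Description Framework. XML is like HTML, but where HTML is mostly concerned with the presentation of data, XML is concerned with the representation of data. On top of that, XML is non-proprietary, operating-system-independent, fairly simple to interpret, text-based and cheap. RDF is the W3C's solution to integrate a variety of different applications such as library catalogs, world-wide directories, news feeds, software, as well as collections of music, images, and events using XML as an interchange syntax. Together the specifications provide a method that uses a lightweight ontology based on the Dublin Core which also supports the “Semantic Web” (easy exchange of knowledge on the Web).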

IPTC 转向 XMP¶

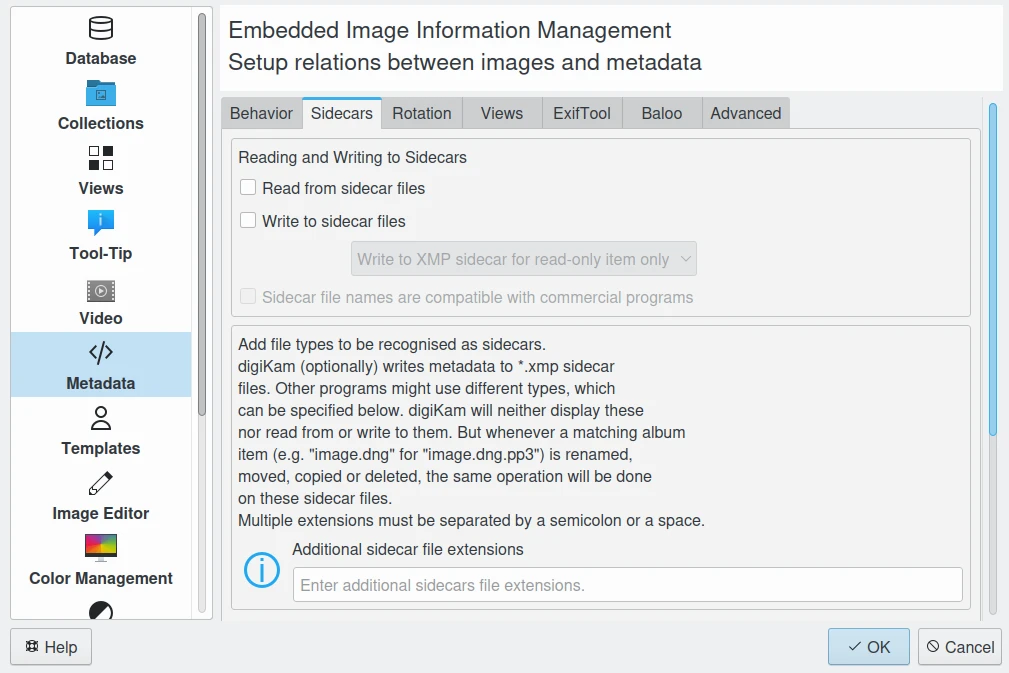

这大概就是为什么 Adobe 在 2001 年左右推出了基于 XML 的 XMP 技术,取代了九十年代的 图像资源块 技术。XMP 全称是 可扩展元数据平台 (Extensible Metadata Platform),融合了 XML 和 RDF 技术。这是一种标签技术,能让用户把文件的相关数据直接嵌入到文件本身里。这些文件信息会以 *.xmp* 扩展名保存(表示使用了 XML/RDF 格式)。

XMP 的优势:就像 ODF 格式永远可读(因为里面的内容都是纯文本),XMP 也能把你的元数据以清晰易懂的 XML 格式保存下来。完全不用担心以后读不出来。这些数据可以直接嵌入图像文件,也可以作为单独的附带文件保存(Adobe 管这个叫 Sidecar 文件)。XMP 可以用在 PDF、JPEG、JPEG2000、GIF、PNG、HTML、TIFF、Adobe Illustrator、PSD、Postscript、Encapsulated Postscript 和视频文件里。在 JPEG 文件里,XMP 信息通常和 Exif、IPTC 数据放在一起。

digiKam 可以显示图像和视频中的 XMP 内容¶

把元数据直接嵌入图像文件,就能轻松跨产品、跨厂商、跨平台、跨用户分享和传输文件,再也不用担心元数据丢失。XMP 数据里最常见的元数据标签都来自都柏林核心元数据计划(Dublin Core Metadata Initiative),包括标题、描述、创作者这些信息。这个标准设计时就是可扩展的,用户可以往 XMP 数据里添加自定义的元数据类型。不过 XMP 一般不允许嵌入二进制数据类型,这意味着如果想在 XMP 里放缩略图这类二进制数据,就得先转成 Base-64 这类 XML 友好的格式。
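To show what encoding binary data in an XML-friendly form means in practice, here is a short sketch using Python's standard `base64` module. The helper names are made up for this example, and the sketch covers only the encoding step, not the writing of an actual XMP packet.

```python
import base64


def encode_for_xml(binary_data):
    """Turn raw bytes into ASCII text that is safe to place inside XML."""
    return base64.b64encode(binary_data).decode("ascii")


def decode_from_xml(text):
    """Recover the original bytes from the Base64 text."""
    return base64.b64decode(text)
```

A JPEG thumbnail, for instance, starts with the bytes FF D8 FF, which encode to the text `/9j/`. The price of this representation is size: Base64 output is about one third larger than the binary input.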

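Many photographers prefer to keep the original of their shots (mostly RAW) for the archive. XMP suits that approach because it keeps the metadata separate from the image file. We do not share this point of view: the link between the metadata file and the image file can break, and, as said above, RAW formats will become obsolete. We recommend using DNG as a container and putting everything inside a single file.

The Dublin Core Metadata Initiative is an open organization engaged in developing interoperable online metadata standards. Its activities include work on metadata architecture and modeling, discussion and collaboration in communities and task groups, annual conferences and workshops, standards liaison, and educational efforts to promote wider acceptance of metadata standards.

digiKam Sidecar File Options Can Be Configured in the Setup Panel¶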

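Data Protection Guidelines¶

Use surge protectors that conform to UL 1449, preferably combined with a UPS.

Use ECC memory to detect and correct memory errors (even when just saving files).

Monitor your hard drives (temperature, noise, and so on) and back up regularly.

Keep backup media at another location, preferably locked up, and combine them with cloud storage.

Use archival-quality media and burners.

Do not panic when data is lost; explain your recovery plan to a layperson in plain language.

Choose a file system, partitions, and folder structure that scale easily.

Use open, non-proprietary standards to manage your photographs.

Carry out a technology and migration review at least every 5 years.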